GSMA Releases Experience Specifications for AI Calling Native Applications

At the 5G Futures Summit hosted by GSMA during MWC Barcelona 2026, GSMA released the white paper Gigauplink, Deterministic Latency, and Network Evolution for the Mobile AI Era. The white paper outlines the development and evolution trends, application scenarios, and business models for operators' native voice services in the mobile AI era. It also sets out specifications for evaluating AI Calling experiences, helping operators build networks centered on voice experience and significantly improve the user experience of voice services.

The white paper points out that, driven by the synergy between 5G-A and AI, mobile communications have entered the mobile AI era. Operators are transforming native voice services from conventional voice calls into AI voice calls. As AI algorithms and computing power are integrated into the native IMS voice network, conventional voice calls are evolving towards enhanced services and innovative applications. This evolution will bring users stable, HD, visual, intelligent, and efficient next-generation calling experiences. Emerging AI calling services, such as AI immersive calling and AI interactive calling, place new requirements on network connectivity and AI capabilities.

According to the white paper, AI-based noise reduction is a typical application of AI immersive calling. By using AI algorithms to suppress ambient noise, operators can deliver clearer native calls and provide users with more immersive experiences. The noise reduction algorithms can be applied in scenarios such as offices (noise level > 40 dB), streets (noise level > 60 dB), and construction sites (noise level > 80 dB), so that users enjoy high-quality voice services without relying on terminals.

AI-powered real-time translation is a typical application of AI interactive calling. Thanks to enhanced voice network capabilities, longstanding language barriers are being removed. AI Calling can provide accurate, real-time voice transcription or translation during video calls, helping business people in international online conferences, tourists traveling abroad, and people with hearing impairments.

As highlighted in the white paper, operators can integrate AI capabilities into native voice services to upgrade their business model and bring new vitality to everyday calling. Subscribers who pay for the service can use AI-driven enhanced functions during conventional calls, which allows operators to move from single-dimensional traffic monetization to multidimensional experience monetization.

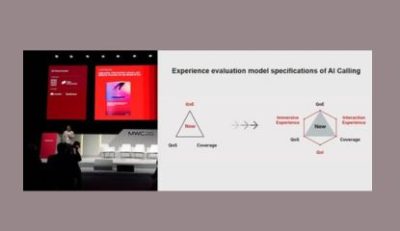

In AI Calling scenarios, measuring user experience is a new challenge for operators. The white paper systematically defines an experience evaluation model for AI Calling. In addition to the three experience indicators of conventional HD voice services (QoE, QoS, and coverage), the model adds three more: AI immersive experience, AI interactive experience, and QoI. Immersive calling can greatly improve the user experience of basic voice calls; for example, the mean opinion score (MOS) and signal-to-noise ratio (SNR) increase significantly. Interactive calling requires the network to provide new interaction channels and capabilities, including Data Channel (DC) and Video Channel (VC), which deliver enhanced experiences such as screen sharing, real-time translation, and interaction with agents. QoI is a key indicator for measuring the intelligence of the voice network. It covers high-quality AI models, flexible AI management, AI-based network and user status awareness and decision-making, and inclusive AI service capabilities. Together, these provide basic network assurance for voice experience upgrades.
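The six-indicator model described above can be pictured as a simple data structure. The following is only a minimal sketch: the indicator names come from the article, while the `CallReport` type, the score scale, and the helper functions are hypothetical and are not taken from the white paper.

```python
from dataclasses import dataclass
from enum import Enum


class Indicator(Enum):
    # Indicators of conventional HD voice services
    QOE = "QoE"
    QOS = "QoS"
    COVERAGE = "coverage"
    # Indicators added for AI Calling
    AI_IMMERSIVE = "AI immersive experience"
    AI_INTERACTIVE = "AI interactive experience"
    QOI = "QoI"


CONVENTIONAL = frozenset({Indicator.QOE, Indicator.QOS, Indicator.COVERAGE})
AI_ADDED = frozenset({Indicator.AI_IMMERSIVE, Indicator.AI_INTERACTIVE, Indicator.QOI})


@dataclass
class CallReport:
    """Hypothetical per-call report mapping each indicator to a score (scale assumed)."""
    scores: dict[Indicator, float]


def missing_indicators(report: CallReport) -> set[Indicator]:
    """Return the indicators of the six-part model that the report does not cover."""
    return set(Indicator) - set(report.scores)


def covers_ai_calling(report: CallReport) -> bool:
    """True only if all six indicators are present in the report."""
    return not missing_indicators(report)
```

Under these assumptions, a report that carries only the three conventional indicators would be flagged as missing the three AI-related ones.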

The ITU has initiated a work project named P.AI-MOS to evaluate the user experience of multimodal AI applications, while proposals for AI Calling voice experience standards are still under research. To accelerate the development of the experience evaluation model, GSMA and industry partners are calling for collective efforts to establish rules that map the key quality indicators (KQIs) of AI applications to the key performance indicators (KPIs) of networks. These efforts aim to fast-track the formulation of mobile AI service experience standards and provide stronger support for the advancement of the mobile AI industry.
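To illustrate what a rule that maps KQIs to KPIs might look like, here is a minimal sketch. Every KQI name, KPI name, and threshold in it is a hypothetical placeholder chosen for illustration; the article does not specify any of them, and the actual rules are still to be defined by the industry.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KpiBound:
    """One network KPI requirement. Names and limits are hypothetical."""
    kpi: str
    limit: float
    higher_is_better: bool

    def met_by(self, value: float) -> bool:
        # A "higher is better" KPI must reach the limit; otherwise it must not exceed it.
        return value >= self.limit if self.higher_is_better else value <= self.limit


# Hypothetical mapping rules: application KQI -> network KPI bounds.
KQI_TO_KPI = {
    "real_time_translation_delay": [
        KpiBound("one_way_latency_ms", 100.0, higher_is_better=False),
        KpiBound("packet_loss_pct", 1.0, higher_is_better=False),
    ],
    "screen_sharing_smoothness": [
        KpiBound("uplink_throughput_mbps", 5.0, higher_is_better=True),
    ],
}


def unmet_kpis(kqi: str, measured: dict[str, float]) -> list[str]:
    """List the KPIs that fail the bounds mapped to `kqi`.

    A KPI with no measurement is treated as a failure.
    """
    failures = []
    for bound in KQI_TO_KPI[kqi]:
        value = measured.get(bound.kpi)
        if value is None or not bound.met_by(value):
            failures.append(bound.kpi)
    return failures
```

With a table of this kind, a degraded application-level indicator could be traced back to the specific network measurements that fall outside their assumed bounds.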
